Video Generation has emerged as a groundbreaking application of Deep Learning, enabling machines to create compelling videos that captivate audiences across industries.

In this comprehensive article, we’ll explore various techniques used to create stunning videos using deep learning models.

From frame-by-frame approaches to sequence-based methods, we’ll uncover the secrets behind generating realistic and imaginative video content.

So buckle up as we embark on this exciting journey!

This article aims to provide a detailed study of the key concepts and methodologies involved in Video Generation using deep learning techniques.

Fundamentals of Deep Learning for Video Generation

To begin our exploration, let’s lay the groundwork by understanding the core principles of deep learning models used in Video Generation.

Understanding Generative Models in Deep Learning

At the heart of Video Generation lie generative models, which create new data instances that resemble a given dataset. Two prominent families of generative models are:

Generative Adversarial Networks (GANs)

GANs consist of two neural networks, a generator and a discriminator, trained against each other. The generator attempts to create realistic videos, while the discriminator tries to distinguish real videos from generated ones. Each improvement in the discriminator forces the generator to produce more convincing output, and this adversarial process gradually refines the quality of the generated content.
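The adversarial game can be made concrete by looking at the two losses involved. The sketch below is a minimal illustration in plain Python, assuming the discriminator outputs the probability that a clip is real. The function names and sample scores are hypothetical, and the generator loss shown is the common non-saturating variant, not something this article prescribes.

```python
import math

EPS = 1e-12  # avoids log(0)

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real clips should score near 1, generated clips near 0."""
    real_term = -sum(math.log(p + EPS) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p + EPS) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating loss: low when the discriminator scores fakes near 1."""
    return -sum(math.log(p + EPS) for p in d_fake) / len(d_fake)

# Hypothetical discriminator scores for a batch of two real and two generated clips.
confident = discriminator_loss(d_real=[0.9, 0.8], d_fake=[0.1, 0.2])
fooled = discriminator_loss(d_real=[0.9, 0.8], d_fake=[0.7, 0.9])
print(confident < fooled)  # the discriminator is worse off when fakes look real
print(generator_loss([0.7, 0.9]) < generator_loss([0.1, 0.2]))
```

In training, the two losses are minimized in alternation, each with respect to its own network's parameters.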

Variational Autoencoders (VAEs)

In contrast to GANs, VAEs employ an encoder-decoder architecture that learns a low-dimensional representation (latent space) of the input data. This latent space enables smooth interpolation and exploration of different video variations.
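Latent-space interpolation is simple to sketch. The example below assumes two latent vectors have already been produced by a trained encoder and walks a straight line between them. The function name is hypothetical, and decoding each point back into frames would need a trained decoder that is not shown here.

```python
def interpolate_latents(z_start, z_end, steps):
    """Return `steps` latent vectors evenly spaced on the line from z_start to z_end."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    path = []
    for i in range(steps):
        alpha = i / (steps - 1)  # 0.0 at the start, 1.0 at the end
        path.append([(1 - alpha) * a + alpha * b for a, b in zip(z_start, z_end)])
    return path

# Two made-up 2-dimensional latent codes.
for z in interpolate_latents([0.0, 2.0], [4.0, 0.0], steps=5):
    print(z)
```

Passing each intermediate vector through the decoder would yield a gradual transition between the two source videos, provided the latent space is smooth.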

Data Representation for Video Generation

To generate videos effectively, we need to represent the data in a manner that captures both spatial and temporal dependencies.

Frame-level Representation

Frame-level representation treats each video frame as an individual entity. This approach is suitable for short videos or when temporal coherence is not crucial.

Sequence-level Representation

Sequence-level representation considers the temporal aspect of videos, treating the entire video as an ordered sequence of frames. This approach captures the dynamic nature of videos and lets a model learn long-range temporal dependencies.
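The difference between the two representations shows up in how training samples are built. In the sketch below, a video is a plain list of frames. Frame-level training would consume the items one at a time, while sequence-level training groups them into fixed-length clips. The helper name, clip length, and stride are illustrative assumptions.

```python
def frames_to_clips(frames, clip_len, stride):
    """Group a list of frames into overlapping fixed-length clips, preserving order."""
    last_start = len(frames) - clip_len
    return [frames[i:i + clip_len] for i in range(0, last_start + 1, stride)]

# Integers stand in for decoded frames (each would normally be an H x W x C array).
video = [0, 1, 2, 3, 4, 5]

frame_level_samples = video                      # six independent samples
sequence_level_samples = frames_to_clips(video, clip_len=4, stride=2)
print(sequence_level_samples)
```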

Frame-by-Frame Video Generation Techniques

Now, let’s dive into frame-by-frame techniques, which build a video one frame at a time and treat each frame much like an individual image.

Convolutional Neural Networks (CNNs) for Video Generation

CNNs have been remarkably successful in image generation tasks and can be adapted for video generation.

Architecture and Working Principles

In video generation, CNNs are often used to generate individual frames by learning spatial patterns and features.

Training Process and Challenges

Because a CNN that generates frames independently has no built-in notion of time, training it for video generation also requires handling temporal dependencies and ensuring smooth transitions between frames.

PixelRNN and PixelCNN

PixelRNN and PixelCNN are two pioneering models that generate images sequentially and can be extended for video generation.

Generating Images Sequentially

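Both models are autoregressive: each pixel is predicted from the pixels generated before it, so sampling proceeds one pixel at a time in either case. PixelRNN models this dependency with recurrent layers, while PixelCNN uses masked convolutions, which allow all pixel predictions to be computed in parallel during training even though generation remains sequential.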
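The masking idea behind PixelCNN can be shown without any deep learning library. The sketch below builds the spatial mask applied to a square convolution kernel so that a pixel only sees pixels above it and to its left. It is a simplified illustration: the function name is hypothetical, and the additional per-channel ordering used for colour images is omitted.

```python
def causal_mask(kernel_size, mask_type):
    """Build a PixelCNN-style spatial mask for a square kernel.

    Type "A" hides the centre pixel (used in the first layer);
    type "B" keeps it (used in later layers).
    """
    if kernel_size % 2 == 0 or mask_type not in ("A", "B"):
        raise ValueError("kernel_size must be odd and mask_type 'A' or 'B'")
    centre = kernel_size // 2
    mask = [[0] * kernel_size for _ in range(kernel_size)]
    for row in range(kernel_size):
        for col in range(kernel_size):
            above = row < centre
            left_on_same_row = row == centre and col < centre
            is_centre = row == centre and col == centre
            if above or left_on_same_row or (is_centre and mask_type == "B"):
                mask[row][col] = 1
    return mask

for row in causal_mask(3, "A"):
    print(row)
```

Multiplying the kernel weights by this mask before each convolution is what prevents the network from looking at pixels it has not generated yet.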

Extending to Video Generation

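To extend these models to generate videos, we treat each frame as an image and apply the sequential generation process frame by frame, usually conditioning each new frame on the frames already generated so that motion stays consistent.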

Sequence-based Video Generation Techniques

Moving forward, let’s explore sequence-based video generation techniques that focus on capturing temporal dependencies.

Recurrent Neural Networks (RNNs)

RNNs are well-suited for sequence generation tasks, making them a natural fit for video generation.

Understanding the Temporal Aspect in Videos

RNNs excel at modelling sequences by maintaining an internal state that captures information from previous time steps.
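The role of the internal state is easiest to see in a single update step. Below is a minimal vanilla RNN in plain Python, where each input is assumed to be a small feature vector summarizing one frame. The weights and function names are made up for illustration and are not trained values.

```python
import math

def rnn_step(x, h, w_x, w_h, b):
    """One vanilla RNN update: h_new = tanh(W_x x + W_h h + b)."""
    h_new = []
    for i in range(len(h)):
        total = b[i]
        total += sum(w_x[i][j] * x[j] for j in range(len(x)))
        total += sum(w_h[i][j] * h[j] for j in range(len(h)))
        h_new.append(math.tanh(total))
    return h_new

def run_rnn(sequence, h0, w_x, w_h, b):
    """Feed per-frame feature vectors through the RNN and return every hidden state."""
    states, h = [], h0
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

# One-dimensional toy example: the second input is zero, yet the state is not.
states = run_rnn([[1.0], [0.0]], h0=[0.0], w_x=[[1.0]], w_h=[[0.5]], b=[0.0])
print(states)
```

The second hidden state is non-zero even though its input is zero, which is the carried-over information from the previous time step.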

LSTM and GRU in Video Generation

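LSTM and GRU, two popular variants of RNNs, use gating mechanisms to decide what to keep and what to forget at each step. This makes them considerably better than plain RNNs at retaining information across many frames, and therefore at producing longer, more coherent video sequences.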

Transformers for Video Generation

Transformers, initially designed for natural language processing, have been successfully adapted to other kinds of sequential data, including videos.

Adapting Transformers for Sequential Data

By incorporating self-attention mechanisms, transformers can effectively capture long-range temporal dependencies in videos.

Self-Attention Mechanism in Video Generation

The self-attention mechanism allows transformers to focus on relevant frames at different time steps, enhancing the quality of generated videos.
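A stripped-down version of self-attention over frames is sketched below. It assumes each frame has already been reduced to a feature vector and, to stay short, uses those vectors directly as queries, keys, and values. A real transformer layer would learn three separate projections and use several attention heads; the function names here are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(frames):
    """Scaled dot-product self-attention over per-frame feature vectors."""
    dim = len(frames[0])
    outputs, weights = [], []
    for query in frames:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in frames]
        attn = softmax(scores)  # how much this frame attends to every frame
        weights.append(attn)
        outputs.append([sum(a * v[d] for a, v in zip(attn, frames))
                        for d in range(dim)])
    return outputs, weights

# Frames 0 and 1 have identical features; frame 2 is different.
outputs, weights = self_attention([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
print(weights[0])
```

Frame 0 assigns more weight to the similar frame 1 than to frame 2, regardless of how far apart they are in time.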

Frame Interpolation for Video Generation

Frame interpolation aims to create intermediate frames between existing frames, enhancing video smoothness and quality.

Techniques Based on Optical Flow

Optical flow-based techniques estimate motion in videos and use this information to interpolate new frames.

Motion Estimation in Videos

Optical flow algorithms track pixel movements across consecutive frames, providing insights into video dynamics.

Frame Interpolation Using Optical Flow

Utilizing optical flow, new frames can be inserted between existing frames to create smoother videos.
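As a rough sketch of the idea, the function below moves every pixel of a frame part of the way along its flow vector to synthesize an in-between frame. It assumes the flow field has already been estimated by some optical flow algorithm and is given as per-pixel (dx, dy) displacements. The function name is hypothetical, and the handling of holes and collisions is deliberately naive.

```python
import math

def interpolate_with_flow(frame, flow, t=0.5):
    """Forward-warp `frame` a fraction `t` of the way along `flow`.

    frame: 2D list of pixel intensities.
    flow:  2D list of (dx, dy) displacements from this frame to the next one.
    Pixels landing outside the image are dropped, holes stay at 0, and when
    two pixels land on the same target the later write wins.
    """
    height, width = len(frame), len(frame[0])
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = flow[y][x]
            nx = math.floor(x + t * dx + 0.5)  # round to nearest pixel
            ny = math.floor(y + t * dy + 0.5)
            if 0 <= nx < width and 0 <= ny < height:
                out[ny][nx] = frame[y][x]
    return out

# A scene translating two pixels to the right between consecutive frames.
frame = [[9, 0, 0, 0]]
flow = [[(2, 0)] * 4]
print(interpolate_with_flow(frame, flow, t=0.5))
```

Practical methods typically warp from both neighbouring frames and blend the results so that holes and occlusions are filled in.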

Deep Learning-based Frame Interpolation

Deep learning approaches have shown promising results in frame interpolation tasks.

Utilizing CNNs and RNNs for Interpolation

CNNs and RNNs are leveraged to learn the underlying patterns and motion in videos, enabling accurate frame interpolation.

Advantages and Limitations of Deep Learning Approaches

While deep learning-based methods achieve impressive results, they may struggle with complex scenes or fast motion.

Video Prediction and Future Frames Generation

Autoencoder-based Approaches

Autoencoder models have been used for predicting future frames and enhancing video generation.

Predicting Future Frames with VAEs

VAEs can predict future frames by leveraging the encoded latent space to model temporal dependencies.

Enhancing Predictions with Conditional Models

Conditional models, paired with autoencoders, enable more accurate and contextually relevant future frame predictions.

Predictive Coding Networks

Predictive coding networks offer a unique perspective on video generation, inspired by neuroscience principles.

Utilizing Predictive Coding for Video Generation

By generating predictions at each level of a hierarchy of representations and passing only the prediction errors upward, predictive coding networks aim to model how a scene will evolve over time.

Training Strategies and Performance Evaluation

Training predictive coding networks requires careful management of the prediction hierarchy and evaluation metrics.

Evaluation Metrics for Video Generation

Assessing the quality of AI-generated videos is crucial for refining models and ensuring visually pleasing results.

Perceptual Metrics

Perceptual metrics gauge visual quality by comparing generated videos to real videos.

Assessing Visual Quality Using SSIM, PSNR, and LPIPS

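Structural Similarity Index (SSIM), Peak Signal-to-Noise Ratio (PSNR), and Learned Perceptual Image Patch Similarity (LPIPS) are common evaluation metrics. PSNR measures pixel-level error, SSIM compares local structure, and LPIPS compares deep network features, so of the three only LPIPS is a learned perceptual measure. All three are computed frame by frame against a reference video.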
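Of these metrics, PSNR is the simplest to compute by hand. The sketch below assumes 8-bit frames flattened into lists of pixel values and averages the per-frame scores over a clip. The helper names are illustrative, and an established image-processing library would normally be used for this in practice.

```python
import math

def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized frames."""
    if len(reference) != len(generated):
        raise ValueError("frames must have the same number of pixels")
    mse = sum((r - g) ** 2 for r, g in zip(reference, generated)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)

def video_psnr(reference_frames, generated_frames):
    """Average per-frame PSNR over a clip."""
    scores = [psnr(r, g) for r, g in zip(reference_frames, generated_frames)]
    return sum(scores) / len(scores)

# Small errors give a higher score than large errors.
print(psnr([100, 150, 200], [101, 149, 202]))
print(psnr([100, 150, 200], [120, 130, 230]))
```

Higher values mean the generated frame is closer to the reference, but the score says nothing about how consecutive frames relate to each other.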

Challenges in Evaluating Temporal Consistency

Evaluating temporal coherence and smoothness presents challenges due to the dynamic nature of videos.
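To illustrate why this is difficult, consider a deliberately crude proxy: the average pixel change between consecutive frames. This is an assumption made for the sake of the example, not a standard metric, and the function name is hypothetical.

```python
def mean_frame_difference(frames):
    """Average absolute pixel change between consecutive frames (flat pixel lists)."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    diffs = []
    for prev, curr in zip(frames, frames[1:]):
        diffs.append(sum(abs(a - b) for a, b in zip(prev, curr)) / len(prev))
    return sum(diffs) / len(diffs)

smooth = [[0, 0], [1, 1], [2, 2]]       # brightness ramps up gradually
flicker = [[0, 0], [10, 10], [0, 0]]    # brightness jumps back and forth
print(mean_frame_difference(smooth), mean_frame_difference(flicker))
```

The proxy flags flicker, but it also penalizes legitimate fast motion and rewards a frozen video, which is exactly the kind of ambiguity that makes temporal consistency hard to score automatically.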

Human Perception Studies

Human perception studies provide valuable insights into the subjective quality of generated videos.

Conducting Subjective Evaluations

Crowdsourcing subjective opinions on video quality helps understand how human viewers perceive AI-generated content.

Crowdsourcing and Best Practices

Effectively conducting crowdsourcing studies requires careful design and consideration of potential biases.

Real-World Applications of Video Generation

The potential applications of Video Generation extend across various domains and industries.

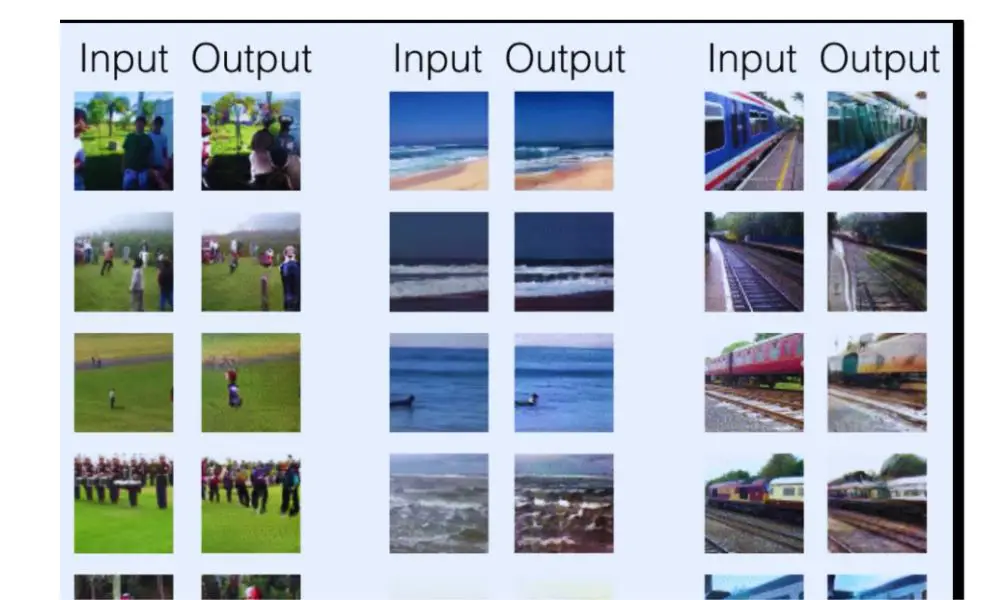

Video Synthesis for Data Augmentation

AI-generated videos are a valuable resource for enhancing data-driven models in different fields.

Improving Model Performance Through Synthetic Data

Using generated videos as additional training data can boost the performance of deep learning models.

Use Cases Across Different Domains

Video synthesis is particularly beneficial in industries like healthcare, autonomous vehicles, and entertainment.

Video Super-Resolution

Video super-resolution techniques enhance video quality by generating high-resolution versions of low-resolution videos.

Enhancing Video Quality with Deep Learning

Deep learning models can upscale videos, revealing finer details and improving overall visual fidelity.

Real-Time Applications and Challenges

Deploying video super-resolution in real-time applications requires optimization for computational efficiency.

Challenges and Future Directions

While Video Generation has come a long way, several challenges persist, necessitating ongoing research and innovation.

Handling Long-Term Temporal Dependencies

Current models struggle to capture long-term dependencies in videos, leading to difficulties in generating coherent and realistic long sequences.

Current Limitations in Capturing Long Video Sequences

Gaps in temporal consistency may arise when generating extended videos with complex dynamics.

Research Directions for Addressing Temporal Dependencies

Advancing techniques like transformers and predictive coding may unlock solutions for handling long-range temporal dependencies.

Generalization and Diversity

Achieving diversity in generated videos while maintaining generalization across various datasets is a critical challenge.

Ensuring Diversity in Generated Videos

A model that overfits a specific dataset tends to reproduce near-copies of its training videos, so diversity has to be encouraged during training and checked explicitly during evaluation.

Improving Generalization Across Various Video Datasets

Training models with diverse datasets and employing transfer learning techniques can enhance generalization.

Video Generation in Deep Learning: Conclusion

As we conclude our journey through the realm of Video Generation in Deep Learning, we stand in awe of the vast potential this technology holds.

From creating stunning visual effects in films to revolutionizing virtual reality experiences, the impact of AI-generated videos is far-reaching and inspiring.

By mastering the techniques explored here and continuously pushing the boundaries of innovation, we are poised to create a future where AI-driven videos seamlessly blend with human creativity, transforming the world of visual storytelling.

FAQ

What is the main difference between GANs and VAEs in Video Generation?

The main difference lies in their architecture and learning approach. GANs employ an adversarial process with a generator and a discriminator, aiming to generate realistic videos. In contrast, VAEs utilize an encoder-decoder architecture to learn a latent space representation, allowing for smooth interpolation and exploration of video variations.

Can deep learning models generate videos with diverse content and styles?

Yes, with the right training data and techniques, deep learning models can produce videos with diverse content and styles. Techniques like conditional models and transfer learning can enhance the diversity of generated videos.

How can Video Generation benefit the healthcare industry?

Video Generation can be applied in healthcare for tasks like medical image synthesis, generating realistic medical data for training models, and creating interactive educational content for patients and medical professionals.

Are perceptual metrics sufficient to evaluate the quality of AI-generated videos?

Perceptual metrics like SSIM and LPIPS provide valuable insights into visual quality, but they may not fully capture temporal coherence. Combining perceptual metrics with human perception studies ensures a comprehensive evaluation.

What are the future directions in Video Generation research?

Future research in Video Generation will likely focus on addressing long-term temporal dependencies, achieving higher diversity in generated videos, and exploring innovative models inspired by neuroscientific principles to improve video prediction and synthesis.